Congestion control is most often discussed in the context of TCP congestion control. TCP (Transmission Control Protocol) uses a network congestion-avoidance algorithm.

This algorithm combines an additive increase/multiplicative decrease (AIMD) scheme with other mechanisms, such as slow start and a congestion window, to achieve congestion avoidance.

This article gives a brief introduction to congestion control in computer networks, covering the leaky bucket and token bucket algorithms and the main congestion control techniques.

What is Congestion Control?

Congestion is a condition in the network layer that occurs when message traffic is so heavy that it slows down network response time.

Effects of Congestion:

- Performance suffers as the delay lengthens.

- Retransmission occurs as the delay increases, worsening the problem.

Congestion Control Algorithms:

Leaky Bucket Algorithm:

Let’s look at an example to help you understand.

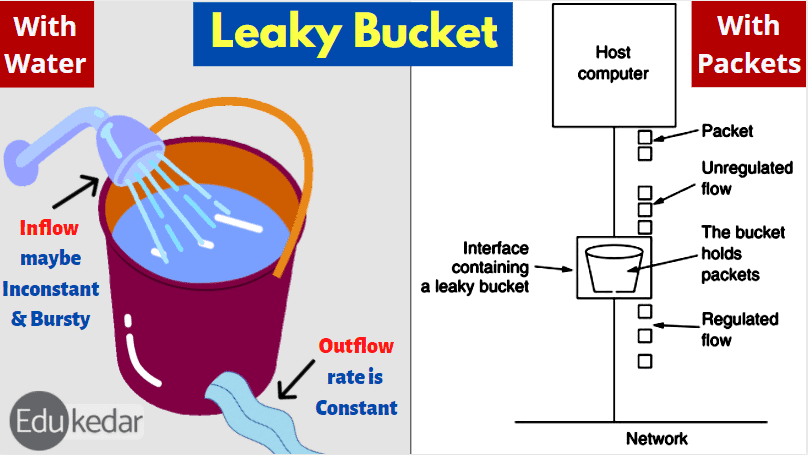

Consider a bucket with a small hole in the bottom. The outflow stays constant regardless of the pace at which water enters the bucket. When the bucket is full, any more water entering flows over the sides and is lost.


Similarly, each network interface has a leaky bucket, and the leaky bucket method has the following steps:

- When the host wishes to send a packet, it is placed in the bucket.

- The bucket leaks at a consistent rate, implying that the network interface sends packets at the same rate.

- The leaky bucket converts bursty traffic to consistent traffic.

- In practice, the bucket is a finite queue with a finite rate of output.
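The steps above can be sketched as a small simulation. This is a minimal illustration rather than a production traffic shaper: the class name `LeakyBucket`, the tick-based timing, and the values `capacity=4` and `leak_rate=1` are all assumptions made for the example.

```python
from collections import deque


class LeakyBucket:
    """Finite queue drained at a constant rate (hypothetical illustration)."""

    def __init__(self, capacity, leak_rate):
        self.capacity = capacity    # maximum number of packets the queue holds
        self.leak_rate = leak_rate  # packets sent per tick
        self.queue = deque()
        self.dropped = 0

    def arrive(self, packet):
        """Queue a packet, or drop it if the bucket is already full."""
        if len(self.queue) < self.capacity:
            self.queue.append(packet)
            return True
        self.dropped += 1
        return False

    def tick(self):
        """Send at most leak_rate packets during this tick."""
        count = min(self.leak_rate, len(self.queue))
        return [self.queue.popleft() for _ in range(count)]


bucket = LeakyBucket(capacity=4, leak_rate=1)
# A burst of six packets arrives at once; only four fit in the bucket.
for packet_id in range(6):
    bucket.arrive(packet_id)

output = [bucket.tick() for _ in range(5)]
print(output)          # [[0], [1], [2], [3], []]
print(bucket.dropped)  # 2
```

The burst arrives all at once, but the output is one packet per tick, and the two packets that did not fit are lost, just like water spilling over the sides of a full bucket.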

Token Bucket Algorithm:

The leaky bucket algorithm enforces a rigid output pattern at the average rate, no matter how bursty the traffic is. To handle bursty traffic without losing data, a more flexible algorithm is needed. The token bucket algorithm is one such algorithm.

The steps of the token bucket algorithm are as follows:

- Tokens are thrown into the bucket at regular intervals.

- The bucket can hold only a maximum number of tokens.

- If a ready packet exists, a token from the bucket is withdrawn, and the packet is dispatched.

- The packet cannot be sent if there is no token in the bucket.

Let’s have a look at an example.

Consider a bucket holding three tokens, with five packets waiting to be transmitted. A packet must capture and destroy one token before it can be transmitted. Three of the five packets get through, while the other two remain stuck, waiting for more tokens to be generated.
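The same scenario can be reproduced in a few lines of code. This is a minimal sketch, assuming one token per packet; the class name `TokenBucket`, its method names, and the packet labels are hypothetical and chosen only for illustration.

```python
class TokenBucket:
    """Token bucket where each packet consumes one token (illustrative sketch)."""

    def __init__(self, capacity, tokens=0):
        self.capacity = capacity             # maximum number of tokens held
        self.tokens = min(tokens, capacity)  # tokens currently available

    def add_tokens(self, count=1):
        """Generate new tokens, never exceeding the bucket capacity."""
        self.tokens = min(self.capacity, self.tokens + count)

    def send(self, waiting):
        """Send as many waiting packets as there are tokens.

        Returns a pair: (packets sent, packets still waiting).
        """
        count = min(self.tokens, len(waiting))
        self.tokens -= count
        return waiting[:count], waiting[count:]


bucket = TokenBucket(capacity=3, tokens=3)
packets = ["p1", "p2", "p3", "p4", "p5"]

first_sent, first_waiting = bucket.send(packets)
print(first_sent)     # ['p1', 'p2', 'p3']
print(first_waiting)  # ['p4', 'p5']

# Two more tokens are generated, so the stuck packets can now go out.
bucket.add_tokens(2)
second_sent, second_waiting = bucket.send(first_waiting)
print(second_sent)    # ['p4', 'p5']
```

Unlike the leaky bucket, the three packets that find tokens are sent immediately as a burst instead of being spaced out one per tick.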

The following are some of the reasons why a token bucket is preferable to a leaky bucket:

The leaky bucket algorithm is a conservative technique that strictly limits the rate at which packets are introduced into the network. The token bucket algorithm adds some flexibility.

At each tick, tokens are generated in the token bucket (up to a certain limit).

An incoming packet must capture a token before it is transmitted, and the transmission takes place at the same rate.

As a result, if tokens are available, some of the bursty packets are transmitted at the same rate, which gives the system some flexibility.

$$M \cdot S = C + \rho \cdot S \quad\Rightarrow\quad S = \frac{C}{M - \rho}$$

Where:

S is the length of the burst, in seconds

M is the maximum output rate, in bytes per second

C is the capacity of the token bucket, in bytes

ρ is the token arrival rate, in bytes per second
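To make the relationship concrete: during a burst of length S at the maximum rate M, the bucket supplies its stored capacity C plus the tokens that arrive during the burst at rate ρ, so M·S = C + ρ·S and therefore S = C / (M − ρ). The sketch below evaluates that expression. The function name `burst_duration` and the numbers used (a 250 KB bucket, tokens arriving at 2 MB/s, a 25 MB/s maximum rate) are assumptions picked for illustration.

```python
def burst_duration(capacity_bytes, token_rate, max_rate):
    """Return the maximum burst length S in seconds, from S = C / (M - rho).

    capacity_bytes: token bucket capacity C, in bytes
    token_rate:     token arrival rate rho, in bytes per second
    max_rate:       maximum output rate M, in bytes per second
    """
    if max_rate <= token_rate:
        # Tokens arrive at least as fast as they are spent: the burst never ends.
        raise ValueError("max_rate must be greater than token_rate")
    return capacity_bytes / (max_rate - token_rate)


# Hypothetical values: C = 250 KB, rho = 2 MB/s, M = 25 MB/s
seconds = burst_duration(250_000, 2_000_000, 25_000_000)
print(round(seconds * 1000, 1))  # 10.9 (milliseconds)
```

With these values the sender can transmit at full speed for roughly 11 ms before it falls back to the token arrival rate.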


Congestion Control Techniques:

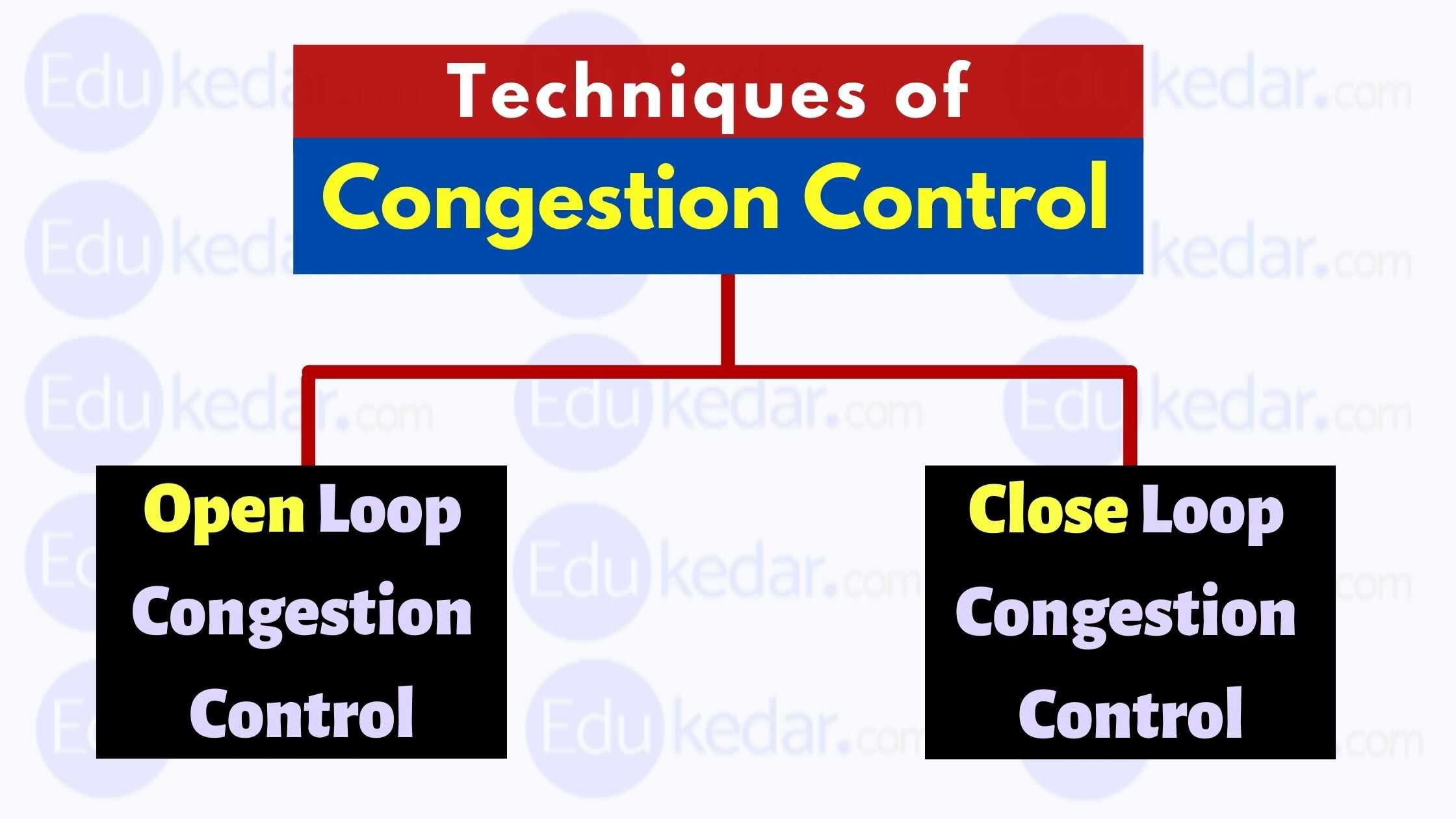

Congestion control refers to the techniques used to control or prevent congestion. These techniques can be broadly divided into two categories:

- Open Loop Congestion Control

- Closed Loop Congestion Control

1. Open Loop Congestion Control

Open loop congestion control policies are applied to prevent congestion before it happens. Congestion control is handled by either the source or the destination.

Retransmission Policy:

This policy governs how packets are retransmitted. If the sender believes that a packet has been lost or corrupted, the packet must be resent. This retransmission may increase congestion in the network.

Retransmission timers must therefore be designed to prevent congestion while still keeping efficiency high.

Window Policy:

The type of window used on the sender side can also affect congestion. With a Go-Back-N window, several packets are resent even though some of them may have been received successfully at the receiver side. This duplication can make the network's congestion worse.

For this reason, a Selective Repeat window should be used, since it resends only the specific packet that was lost.
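The difference in retransmitted traffic can be illustrated with a simplified comparison. This sketch assumes a single lost packet and ignores timers and acknowledgments; the function names and sequence numbers are hypothetical.

```python
def go_back_n_resend(lost_seq, last_sent_seq):
    """Go-Back-N resends the lost packet and every packet sent after it."""
    return list(range(lost_seq, last_sent_seq + 1))


def selective_repeat_resend(lost_seq, last_sent_seq):
    """Selective Repeat resends only the packet that was lost."""
    return [lost_seq]


# Packets 0..7 were sent and packet 3 was lost.
print(go_back_n_resend(3, 7))         # [3, 4, 5, 6, 7]
print(selective_repeat_resend(3, 7))  # [3]
```

In this case Go-Back-N puts five packets back on the network where Selective Repeat puts one, which is why the window policy matters when the network is already close to congestion.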

Discarding Policy:

A good discarding policy lets routers prevent congestion by discarding corrupted or less sensitive packets while still maintaining the quality of the message.

When transmitting audio files, for example, routers can discard less sensitive packets to prevent congestion while maintaining the quality of the audio.

Acknowledgment Policy:

Because acknowledgments are also part of the network load, the acknowledgment policy imposed by the receiver can affect congestion. Several approaches can be used to prevent congestion caused by acknowledgments.

Rather than sending an acknowledgment for every single packet, the receiver should send one acknowledgment for N packets. The receiver should send an acknowledgment only when it has a packet to send or when a timer expires.
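A quick calculation shows how much acknowledgment traffic batching saves. This is only a rough sketch: the function name `acks_sent` is hypothetical, and it assumes that a final partial batch is still acknowledged once (for example, when the timer expires).

```python
import math


def acks_sent(packets_received, ack_every=1):
    """Number of acknowledgments when one ACK covers up to ack_every packets.

    Assumption: a final partial batch still triggers one acknowledgment.
    """
    return math.ceil(packets_received / ack_every)


per_packet = acks_sent(100, ack_every=1)
batched = acks_sent(100, ack_every=4)
print(per_packet)  # 100
print(batched)     # 25
```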

Admission Policy:

An admission policy is a mechanism for preventing congestion. Before forwarding a network flow, the switches along its path should first check the flow's resource requirements. If the network is already congested, or if there is a chance of congestion, the router should refuse to establish the virtual circuit connection to prevent further congestion.
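An admission check of this kind might look like the sketch below. The function name `admit_flow`, the bandwidth units, and the 90% `headroom` threshold are assumptions for illustration; real routers use their own resource models.

```python
def admit_flow(requested_bw, reserved_bw, link_capacity, headroom=0.9):
    """Accept a new flow only if the link stays under a safe utilization.

    requested_bw:  bandwidth the new flow asks for
    reserved_bw:   bandwidth already promised to existing flows
    link_capacity: total bandwidth of the link (same units as above)
    headroom:      assumed fraction of capacity the router is willing to commit
    """
    return reserved_bw + requested_bw <= link_capacity * headroom


print(admit_flow(20, 60, 100))  # True: 80 units fit under the 90-unit limit
print(admit_flow(40, 60, 100))  # False: 100 units would exceed the limit
```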

All of the policies listed above are implemented to prevent network congestion before it occurs.


2. Closed Loop Congestion Control

After congestion has occurred, the closed-loop congestion control technique is employed to treat or alleviate it. Different protocols employ a variety of strategies, including the following:

Backpressure:

Backpressure is a technique in which a congested node stops accepting packets from its upstream node. This may cause the upstream node or nodes to become congested in turn and to reject data from the nodes above them. Backpressure is a node-to-node congestion control technique that propagates in the opposite direction of the data flow.

The backpressure technique can be applied only to virtual circuits, where each node has information about its upstream node.

As an example, consider a chain of three nodes in which the 3rd node becomes congested and stops receiving packets. The 2nd node may then become congested because its output data flow slows down. Similarly, the 1st node may become congested and inform the source to slow down.
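This chain reaction can be simulated with a toy model. The sketch below makes several simplifying assumptions: every node has the same buffer size, each node forwards at most one packet per tick, the source offers one packet per tick, and the last node is congested and never drains. The function name and parameters are hypothetical.

```python
def simulate_backpressure(num_nodes=3, capacity=2, ticks=20):
    """Return (tick at which the source is told to slow down, buffer levels).

    The last node is congested and never drains, so its buffer fills first
    and the pressure spreads upstream, one node at a time.
    """
    buffers = [0] * num_nodes
    for tick in range(1, ticks + 1):
        # Forward packets downstream, starting with the node nearest the congestion.
        for i in range(num_nodes - 2, -1, -1):
            if buffers[i] > 0 and buffers[i + 1] < capacity:
                buffers[i] -= 1
                buffers[i + 1] += 1
        # The source offers one packet per tick to the first node.
        if buffers[0] < capacity:
            buffers[0] += 1
        else:
            # The first node is full: backpressure has reached the source.
            return tick, buffers
    return None, buffers


print(simulate_backpressure())  # (7, [2, 2, 2])
```

The buffers fill from the congested node backwards, and only once the first node is full does the source learn that it has to slow down.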

Choke Packet Technique:

The choke packet technique can be applied to both virtual circuits and datagram subnets. A choke packet is a packet sent by a node to the source to inform it of congestion. Each router monitors its resources and the utilization of each of its output lines.

Whenever resource utilization exceeds the threshold value set by the administrator, the router sends a choke packet directly to the source, giving it feedback to reduce its traffic. The intermediate nodes through which the packets have traveled are not warned about the congestion.
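The threshold check can be sketched as follows. This is a simplified model: the names `Source`, `handle_choke`, and `check_congestion` are hypothetical, and halving the rate on each choke packet is an assumption, since the reduction policy depends on the protocol.

```python
from dataclasses import dataclass


@dataclass
class Source:
    name: str
    rate: float  # sending rate in packets per second

    def handle_choke(self, reduction=0.5):
        # Assumption: the source cuts its rate by a fixed factor per choke packet.
        self.rate *= reduction


def check_congestion(utilization, threshold, source):
    """Send a choke packet to the source if utilization exceeds the threshold.

    Returns True when a choke packet was sent.
    """
    if utilization > threshold:
        source.handle_choke()
        return True
    return False


host = Source("host-a", rate=100.0)
below = check_congestion(0.60, 0.80, host)  # under the threshold: no choke packet
above = check_congestion(0.95, 0.80, host)  # over the threshold: choke packet sent
print(below, above, host.rate)  # False True 50.0
```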


Implicit Signaling:

In implicit signaling, there is no communication between the congested nodes and the source. The source infers that there is congestion in the network. For example, when a sender sends several packets and receives no acknowledgment for a long time, one assumption is that the network is congested.
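A sender that reacts to missing acknowledgments might adjust its window as in the sketch below. It borrows the additive increase/multiplicative decrease idea mentioned in the introduction; the function name, the step of one packet, and the halving factor are assumptions for illustration, not the behavior of any specific TCP variant.

```python
def adjust_window(cwnd, ack_received, increase=1, decrease=0.5, minimum=1):
    """Return the new congestion window after one round trip.

    An acknowledgment grows the window additively; a missing acknowledgment
    is treated as a sign of congestion and shrinks it multiplicatively.
    """
    if ack_received:
        return cwnd + increase
    return max(minimum, cwnd * decrease)


cwnd = 8
history = []
# Two acknowledged rounds, one timeout, then another acknowledged round.
for ack_received in [True, True, False, True]:
    cwnd = adjust_window(cwnd, ack_received)
    history.append(cwnd)

print(history)  # [9, 10, 5.0, 6.0]
```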

Explicit Signaling:

In explicit signaling, a node that experiences congestion can explicitly send a signal to the source or the destination to inform it of the congestion. The difference between the choke packet technique and explicit signaling is that in explicit signaling the signal is included in the packets that carry data, rather than in a separate packet as in the choke packet technique.

Explicit signaling can occur in either the forward or the backward direction.

Forward Signaling:

In forward signaling, a signal is sent in the direction of the congestion. The destination is warned about the congestion. In this case, the receiver adopts policies to prevent further congestion.

Backward Signaling:

In backward signaling, a signal is sent in the direction opposite to the congestion. The source is warned about the congestion and needs to slow down.