Bits can be corrupted while being transported over a computer network owing to interference and network difficulties. Errors are caused by corrupted bits, which cause the receiver to receive erroneous data. Parity Check, Checksum, and Cyclic Redundancy Check (CRC) are the three basic approaches for detecting flaws in data frames Cyclic Redundancy Check (CRC).

Error detection techniques are in charge of determining whether or not an error occurred in the frame that was transmitted across the network. The number of error bits and the type of mistake are not taken into account.

The sender must send some extra bits in addition to the data bits in order to detect errors. Based on the additional redundant bits, the receiver performs appropriate checks. If the data is error-free, the unnecessary bits are removed before the message is passed to the top layers.

Cyclic Redundancy Check Meaning

A cyclic redundancy check (CRC) is an error-detection code that is extensively used in digital networks and storage devices to detect unintentional data changes. A short check value is applied to blocks of data entering these systems, based on the remainder of a polynomial division of their contents.

The calculation is performed upon retrieval, and if the check values do not match, corrective action against data corruption can be done.

Cyclic Redundancy Check CRCs can be used to repair errors (see bit filters).

The algorithm is based on cyclic codes, and the check (data verification) value is a redundancy (it increases the message without adding information). CRCs are popular because they are straightforward to construct in binary hardware, mathematically evaluate, and are particularly good at identifying typical errors caused by noise in transmission channels.

The function that generates the check value is sometimes employed as a hash function because it has a defined length.

Cyclic Redundancy Check Program

The theory of cyclic error-correcting codes underpins CRCs. W. Wesley Peterson proposed the use of systematic cyclic codes, which encrypt messages by adding a fixed-length check value, for the purpose of error detection in communication networks in 1961.

Cyclic codes are not only simple to use but are also ideally adapted to detecting burst errors, which are contiguous sequences of erroneous data symbols in communications. Burst faults are common transmission faults in many communication channels, including magnetic and optical storage technologies, therefore this is crucial.

An n-bit Cyclic Redundancy Check CRC applied to a data block of any length will typically identify any single error burst not longer than n bits, with (1 2n) being the percent of all longer error bursts it will detect.

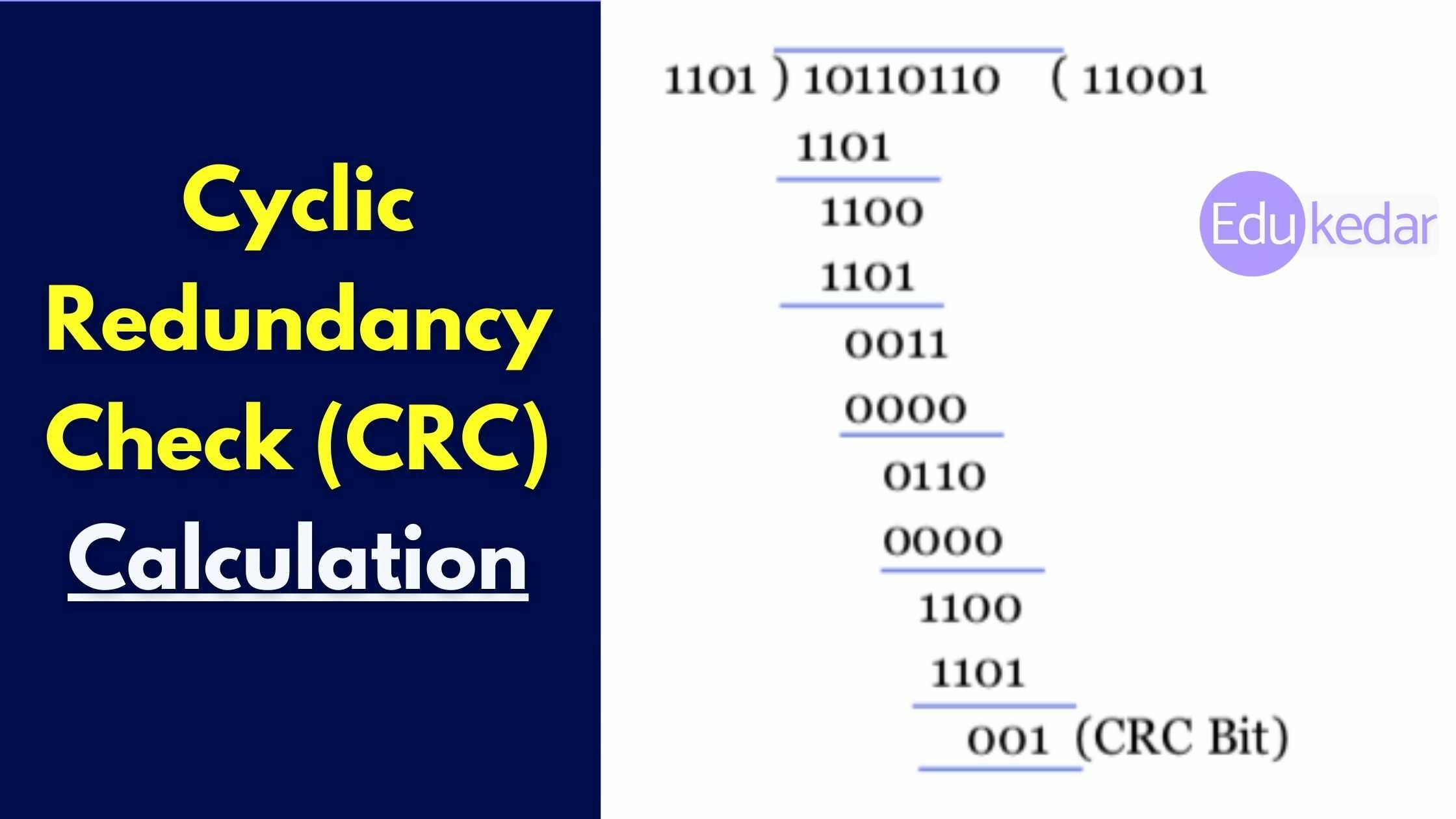

The definition of a so-called generator polynomial is required for the specification of a CRC code. This polynomial is used as the divisor in a polynomial long division, in which the message is used as the dividend, the quotient is discarded, and the remainder is used as the result.

The crucial caveat is that because the polynomial coefficients are produced using finite field arithmetic, the addition operation can always be done bitwise parallel (there is no carry between digits).

In practice, all commonly used CRCs use the Galois field, or, to put it another way, a two-element finite field, GF (2). The two parts are commonly referred to as 0 and 1, which is a good match for computer architecture.

When the check value of a CRC is n bits long, it is referred to as an n-bit CRC. Multiple CRCs with different polynomials are conceivable for a given n. This polynomial has the maximum degree n, implying that it has n + 1 terms.

In other words, the polynomial is n + 1 bits long and requires n + 1 bits to encode. Because the MSB and LSB are always 1 in most polynomial specifications, they are usually omitted. The CRC and related polynomial are usually referred to as CRC-n-XXX, as shown in the table below.

The parity bit, the simplest error-detection scheme, is actually a 1-bit CRC: it employs the generator polynomial x + 1 (two terms) and is known as CRC-1.

Must Read ➜ What is HTTP?

Data Error Cyclic Redundancy Check (CRC)

CRCs are meant to safeguard against common sorts of mistakes on communication channels, where they can provide speedy and reasonable assurance of message integrity. They are not, however, enough for preventing data tampering on purpose.

To begin with, because there is no authentication, an attacker can change a message and recompute the CRC without being discovered. CRCs and cryptographic hash functions, while stored alongside data, do not protect against purposeful data change.

Cryptographic authentication measures, such as message authentication codes or digital signatures, must be used by any application that requires protection against such assaults (which are commonly based on cryptographic hash functions).

Second, unlike cryptographic hash functions, the CRC function is easily reversible, making it unsuitable for use in digital signatures.

One more thing is that CRC is a linear function.

As a result, even if the CRC is encrypted with a stream cipher that uses XOR as its combining operation (or a mode of a block cipher that effectively turns it into a stream cipher, such as OFB or CFB), the message and its associated CRC can be manipulated without knowing the encryption key; this was one of the well-known design flaws of the Wired Equivalent Privacy (WEP) protocol.

CRC based Encoding

The size of the communication block and the CRC divisor is agreed upon by the communicating parties. CRC (7, 4) is one example of a block, with 7 being the total length of the block and 4 being the number of bits in the data segment. 1011 is a possible divisor.

The data segment is binary divided by the divisor by the sender.

The remaining CRC bits are then appended to the end of the data segment. As a result, the generated data unit is divisible by the divisor exactly.

Must Read ➜ Application Layer Protocols

The process of Decoding

The divisor is used by the receiver to divide the incoming data unit

The data unit is assumed to be correct and accepted if there is no residual.

Otherwise, it’s assumed that the data is tainted and will be discarded. The receiver may then send the sender an incorrect acknowledgment for retransmission.